Chloe Cohen ’27 is a prospective philosophy and public policy major. She is a tour guide and a member of several writing-based clubs on campus. Contact her at cdcohen@wm.edu.

The views expressed in the article are the author’s own.

With few established societal norms, the parameters of ethical artificial intelligence usage must often be set by individuals and institutions. The College of William and Mary notably does not have a campus-wide AI use policy independent of its honor code; rather, such policies are left to the jurisdiction of each professor and may look different for any given course. While it is natural for our AI policies to reflect the complexity of this issue, our academic communities would benefit from greater clarity about our expectations and priorities as technology like AI continues to advance.

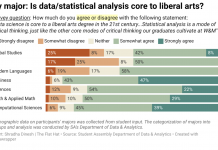

According to Professor of English and American Studies Elizabeth Losh, who served as chair of the AI Writing Tools Working Group at the College and is currently the co-chair of the national Modern Language Association and the Conference on College Composition and Communication Joint Task Force on Writing and AI, the College has three different types of syllabus language that faculty are encouraged to use. AI usage may be banned outright, permitted with acknowledgement or permitted without restriction, according to Losh.

Losh noted that these policies do not adhere to the MLA-CCCC Joint Task Force on Writing and AI’s recommendation in its first working paper published this past July. She also clarified that any personal beliefs she sets forth are separate from her former role as the committee’s chair.

“Now, I would point out that [in] our working paper from the MLA, we tell people not to do that because it’s very confusing to students to have different policies for different classrooms. But that’s what our group here at William and Mary decided. And even though, you know, I was chairing the group, I was not the majority opinion,” Losh said.

Losh is correct: having various AI policies may feel destabilizing. It is important, however, that professors are able to tailor AI use policies to individual courses. An appropriate form of AI usage in an introductory sociology class may not be appropriate in a higher-level sociology course intended for upperclassmen and departmental majors. College students are always adapting to new environments and circumstances. We have already acclimated to technologies that, this time last year, very few knew existed.

In my ideal world, academia would be a haven free of generative AI. There are many reasons why students might refrain from using generative AI in their academic work. Whereas Google locates and displays human-generated work in its original form, generative AI services such as ChatGPT consume human-generated work and regurgitate a conglomeration of its findings. It’s no wonder OpenAI faces copyright infringement accusations from many authors.

I also fear that students who rely on ChatGPT may forgo essential communication skills. If this were to happen, human scholarship might suffer immensely.

And STEM fields are not exempt from these fears, as noted by Associate Teaching Professor of Organic Chemistry Dana Lashley.

“I think in the sciences we probably have a little bit less to worry about as far as our core classes go,” Lashley said. “But then later, what I am worried about is students using ChatGPT to write whole papers, and those things getting published, and ChatGPT did the whole thing, and nobody did any research. That’s where it becomes problematic in the sciences.”

If academics are no longer expected to conduct, record and present their own research, where will scholarship and progress originate in the future?

Lashley has not yet needed to create an AI policy for her organic chemistry courses; however, she said she is unsure how she will design a paper students will write this winter for her study abroad class in Geneva, Switzerland. Lashley, who does not want to entirely ban students from using ChatGPT, may instead follow the example of professors at other academic institutions by having her students fact-check ChatGPT’s response to a topically relevant prompt that they enter into it.

While I agree students must be proficient in information verification, especially today, I worry that such assignments do not advance students’ research and writing skills. If we begin to replace our writing assignments with fact-checking assignments, we risk educating a generation on evaluation rather than expression. Students should be well-versed in both.

Despite these concerns, I understand why AI usage ought to be permitted in some instances. After all, I know that popular study tools such as Quizlet incorporate the technology. Additionally, as Losh noted, Google and Microsoft have already begun work on integrating large language models into Google Docs and Microsoft Word, respectively.

“It’s not realistic to ban Microsoft Word from being used in writing for William and Mary,” Losh said.

Professors, too, can benefit from AI tools. In 2019, for example, Lashley introduced the College to Gradescope, which she and many other professors use to grade work more quickly and, in Lashley’s experience, to give students much more feedback.

As a first-semester freshman, my experience with course-specific AI-use policies is limited. Of those I have encountered thus far, however, one strikes me as a particularly effective and realistic adoption of the College’s second policy “type” regarding AI.

Associate Teaching Professor of Public Policy Alexandra Joosse’s Intro to Public Policy course allows students to use ChatGPT and similar technologies in specific ways and under specific circumstances. On each course assignment, all students must state whether they did or did not employ AI in their work. Students who did must include the prompts given and responses obtained. Those students must also independently verify and cite all AI-generated information. Permissible AI use is limited to brainstorming and source consideration; moreover, failure to appropriately report or confirm AI-generated information constitutes plagiarism.

Losh employs a similar policy. While her students may use AI in their coursework, she noted that she asks students to provide documentation explaining how they’ve used AI.

There is much to appreciate about these professors’ guidelines. Professor Joosse’s “AI Tool Guidance” acknowledges the pros and cons of AI usage, including AI’s ability to assist with preliminary research as well as its struggles with adhering to information ethics and averting biases. Students are permitted to consult AI, but they are not given free rein of its capabilities. Joosse’s guidelines should also be praised for regulating the amount of work that students can delegate to AI, which should not be used to complete large chunks (and certainly not the entirety) of a project.

AI ought to be a tool, not a ghost writer.

Most importantly, students are held accountable for outsourcing their work to an amassment of ones and zeroes. By requiring students who utilize ChatGPT to visit and verify ChatGPT’s sources, Joosse promotes healthy skepticism of AI-generated responses and encourages students to do their due diligence. Students cannot simply type in a prompt, paste in a reworded summary of the response generated and submit.

Though I presently have little intention of using generative AI tools in academic work even with permission, I cannot justifiably claim that others should not. The nonacademic world will not shy away from AI. Though scholars are held in some regards to a higher standard than the general public, we would be foolish to slam the door on a resource that is being hailed by the very world we aim to better understand. Instead, I propose something of a middle ground: acceptance, but with boundaries.

The College should continue to allow professors to create AI policies specific to their courses. A blanket statement would not make this issue any less complex. The College should, however, publish a formal summary of its approach.

Faculty should remain able to permit specific uses of AI while prohibiting others. Ideally, accepted AI usage would not discourage or disincentivize students from learning about the world or how to express thought. Professors may also want to account for AI use when grading students’ assignments by adapting their grading rubrics to reflect the different types of work required from students who choose to use AI tools.

Finally, students, families, faculty and administrators should be encouraged to discuss the role of AI in their work and be given the resources to have that discussion.